- Latest model tops all Chinese rivals and ranks ahead of OpenAI and Google in blind testing

- Strong results in complex web-development tasks highlight intensifying global race in AI programming

Alibaba Group’s latest large language model Qwen 3.6-Plus has surged to second place worldwide in a leading AI coding benchmark, marking a rare moment when a Chinese-developed system outperformed offerings from several US tech giants in independent testing.

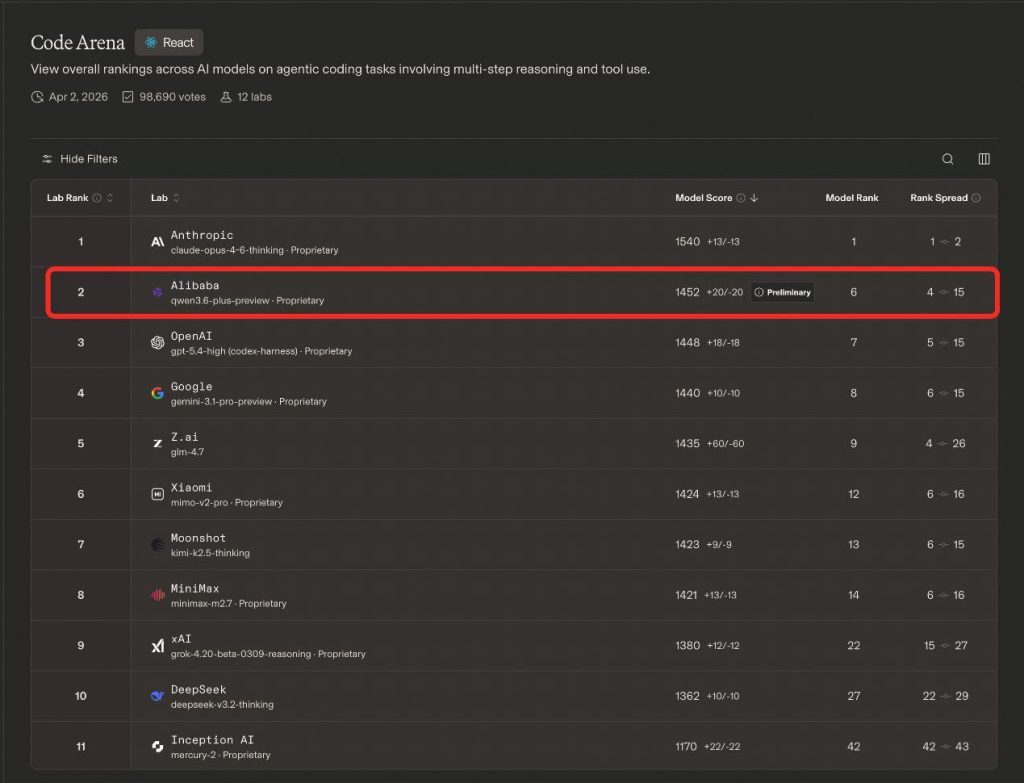

Results released April 3 by LMArena’s Code Arena — a widely watched blind-evaluation leaderboard focused on programming performance — showed Alibaba’s Qwen 3.6-Plus ranking just behind Anthropic’s Claude-Opus-4.6-Thinking, while surpassing models from OpenAI, Google and Elon Musk’s xAI.

LMArena is regarded as one of the industry’s most credible benchmarking platforms because its rankings come from anonymous real-user comparisons and live head-to-head evaluations rather than controlled vendor testing. That makes the leaderboard a closely followed proxy for real-world model capability.

According to the latest ranking, Claude-Opus-4.6-Thinking led the table with a score of 1540, followed by Qwen 3.6-Plus at 1452. OpenAI’s GPT-5.0-High scored 1448, while Google’s Gemini 3.1 Pro Preview posted 1440, placing Alibaba’s model ahead of both by narrow margins.

The result made Qwen 3.6-Plus the highest-ranked Chinese model on the global list.

The model’s strongest showing came in the React-focused sub-ranking, considered among the most demanding evaluations in AI coding.

Unlike traditional code-completion tests, the benchmark assesses whether models can independently execute full software engineering workflows — from project setup and architecture design to debugging and deployment — in realistic web development environments.

Alibaba said the system achieved higher programming performance than several competing models with two to three times as many parameters, underscoring a broader industry shift toward efficiency gains rather than sheer scale.

Qwen 3.6-Plus was officially released on April 2 as the first model in Alibaba’s new Qwen 3.6 series. The company said the model features native multimodal understanding, stronger reasoning capabilities and improved agent-based task execution, with coding emerging as a key focus area.

The strong benchmark performance also lifted Alibaba to fourth place among global AI laboratories tracked in the Code Arena rankings, behind Anthropic, OpenAI and Google.

Alibaba added that additional models in the Qwen 3.6 lineup, including open-source variants and a more powerful flagship version dubbed Qwen 3.6-Max, are expected to launch soon as the company accelerates its push into advanced AI infrastructure and developer tools.